How to Use MiniMax M2.5 with OpenClaw

MiniMax M2.5 is a large language model with strong reasoning, coding, and multilingual capabilities. Connect it to your OpenClaw instance through OpenRouter in a few minutes, then talk to your agent through WhatsApp, Telegram, Discord, or Slack.

Why Use MiniMax M2.5 with OpenClaw?

MiniMax M2.5 brings strong reasoning and extended context to your self-hosted AI agent at competitive pricing.

Strong Reasoning

MiniMax M2.5 delivers excellent reasoning and problem-solving capabilities, making it a reliable backbone for autonomous AI agent workflows.

Multilingual Support

MiniMax M2.5 performs well in Chinese, English, and many other languages, which suits global teams and multilingual automation tasks.

Capable at Coding

Handles code generation, debugging, and technical tasks effectively — well-suited for DevOps automation and developer workflows.

Long Context Window

MiniMax M2.5 supports extended context lengths, letting your OpenClaw agent handle lengthy conversations, documents, and complex multi-turn interactions.

Cost-Effective via OpenRouter

Access MiniMax M2.5 through OpenRouter at competitive per-token pricing. One API key gives you M2.5 plus hundreds of other models — no separate MiniMax account needed.

Fast Inference

Low-latency responses mean your OpenClaw agent replies quickly through WhatsApp, Telegram, Discord, or Slack.

Setup in 4 Steps

Get MiniMax M2.5 running as your OpenClaw agent via OpenRouter in under five minutes.

Get Your OpenRouter API Key

Sign up at openrouter.ai, navigate to the API Keys section, and generate a new key. OpenRouter gives you access to MiniMax M2.5 and hundreds of other models with a single key — no separate MiniMax account required.

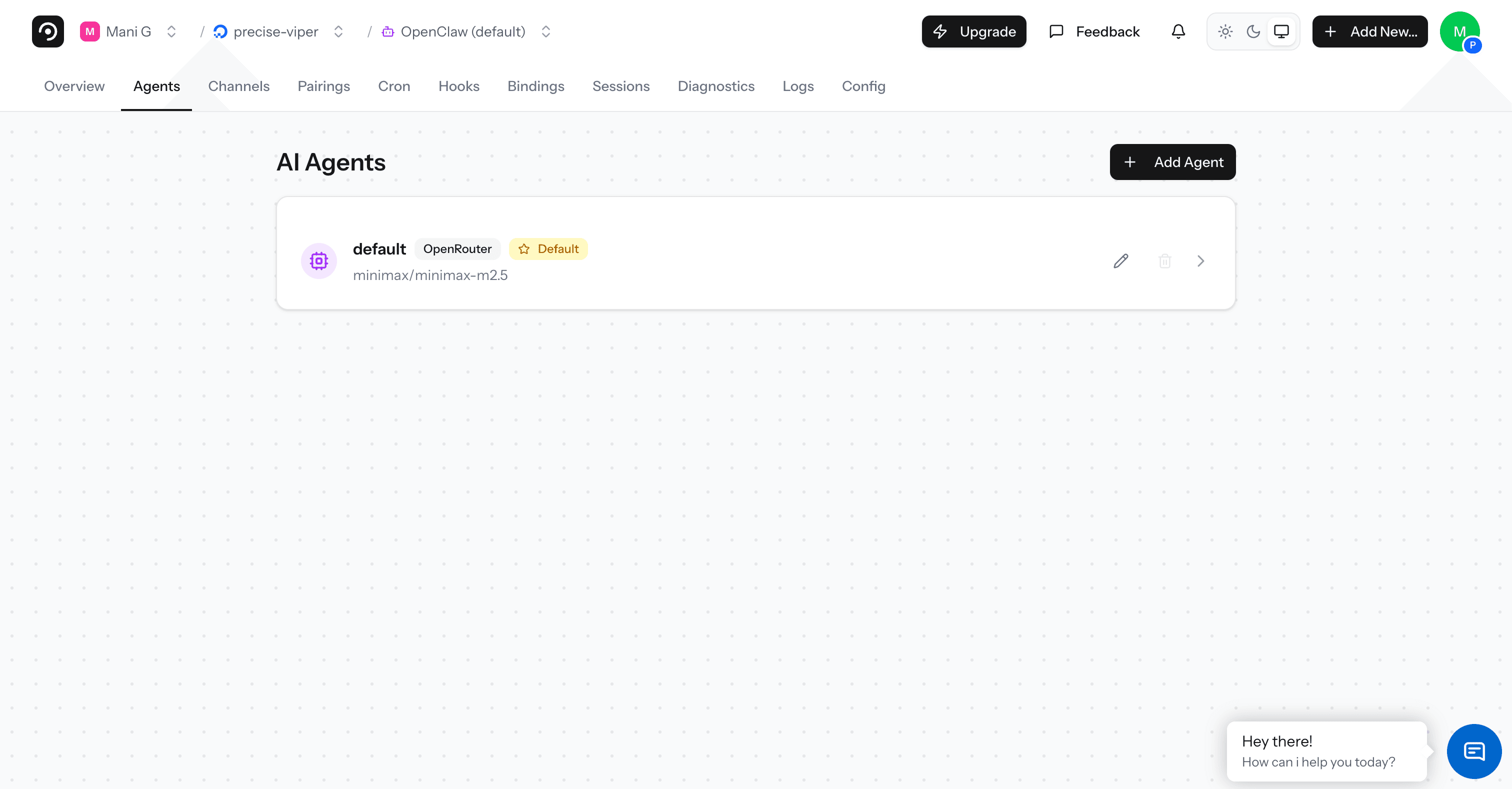
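Before pasting the key into the dashboard, you may want to confirm that it works. The following is a minimal sketch, not part of the DeployClaw setup itself. It assumes OpenRouter's OpenAI-compatible chat completions endpoint and the model slug `minimax/minimax-m2.5`; check the model page on openrouter.ai for the exact slug. The function names `build_request` and `send` are illustrative.

```python
import json
import urllib.request

# Assumed values, not taken from this guide: confirm both on openrouter.ai.
OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"
MODEL_SLUG = "minimax/minimax-m2.5"


def build_request(api_key: str, prompt: str, model: str = MODEL_SLUG) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request and return the first reply's text. Needs network access."""
    with urllib.request.urlopen(request, timeout=30) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

Calling `send(build_request(your_key, "Say hello"))` should return a short reply if the key is valid. An invalid key raises `urllib.error.HTTPError` instead.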

Open the DeployClaw Dashboard

Log in to app.deployclaw.com and open your OpenClaw instance. Navigate to the Agents tab from the top menu.

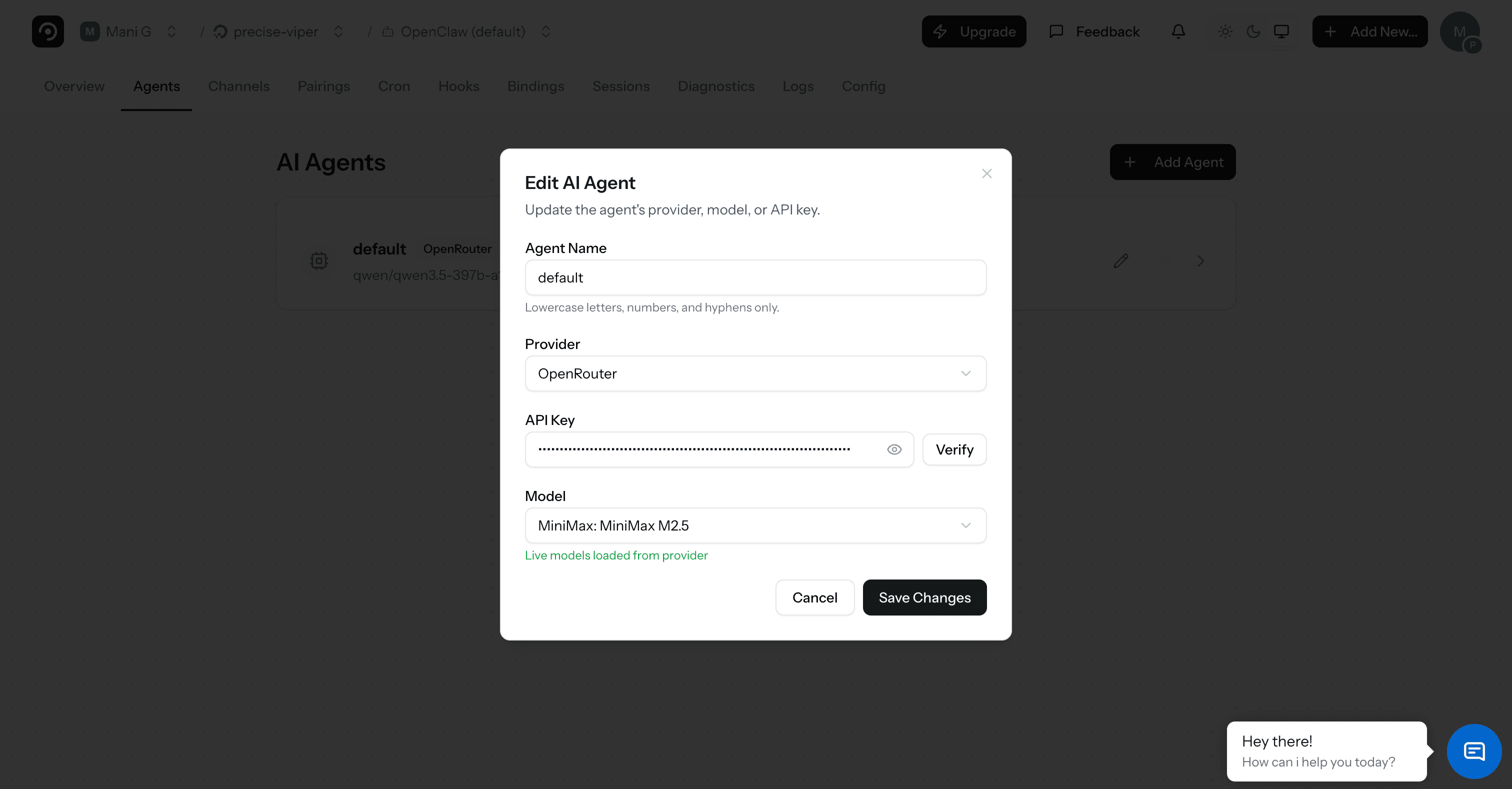

Add a New Agent

Click "+ Add Agent", select "OpenRouter" as the provider, paste your API key, and click "Verify". Then choose "MiniMax: MiniMax M2.5" from the Model dropdown.

Configure and Deploy

Give your agent a name (e.g., "MiniMax Agent") and click "Add Agent" to save. Your OpenClaw instance will now route messages to MiniMax M2.5 through OpenRouter.

That's it. Your OpenClaw agent is now powered by MiniMax M2.5. Send it a message through any connected platform to start.

Frequently Asked Questions

What is MiniMax M2.5?

MiniMax M2.5 is a large language model developed by MiniMax. It offers strong reasoning, coding, and multilingual capabilities with an extended context window, making it a versatile choice for AI agent workflows.

Why use OpenRouter instead of MiniMax's API directly?

OpenRouter gives you access to MiniMax M2.5 and hundreds of other models with a single API key and unified billing. No need to create a separate MiniMax account. You can also switch models instantly without changing provider settings.

How much does MiniMax M2.5 cost through OpenRouter?

OpenRouter bills per token, and the rate depends on the model. You pay OpenRouter for API usage and DeployClaw separately for the deployment platform. Check openrouter.ai for current MiniMax M2.5 pricing.
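To get a rough idea of what per-token billing means for your usage, you can estimate the cost from token counts. This sketch assumes that input and output tokens are priced separately per million tokens. The prices in the example are placeholders, not MiniMax M2.5's actual rates, and `estimate_cost_usd` is an illustrative name.

```python
def estimate_cost_usd(
    prompt_tokens: int,
    completion_tokens: int,
    input_price_per_million: float,
    output_price_per_million: float,
) -> float:
    """Estimate API cost in USD from token counts and per-million-token prices."""
    input_cost = prompt_tokens / 1_000_000 * input_price_per_million
    output_cost = completion_tokens / 1_000_000 * output_price_per_million
    return input_cost + output_cost


# Placeholder prices for illustration only; look up the real rates on openrouter.ai.
example = estimate_cost_usd(
    prompt_tokens=200_000,
    completion_tokens=50_000,
    input_price_per_million=0.50,
    output_price_per_million=2.00,
)
print(f"Estimated cost: ${example:.2f}")
```

Substitute the current input and output prices from the model's OpenRouter page to get a realistic figure. DeployClaw's platform fee is separate and not included here.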

Can I switch between MiniMax M2.5 and other models?

Absolutely. Since you're using OpenRouter, switching models is as easy as changing the model selection in your agent settings. Try MiniMax M2.5, Claude, GPT, Llama, or any other model — all through the same API key.
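At the API level, the reason switching is this simple can be sketched as follows: with an OpenAI-style request, only the `model` field changes while the messages and the API key stay the same. The model slugs below are assumptions for illustration, and the dashboard's model dropdown handles this for you.

```python
# Assumed slugs for illustration; confirm the exact names on openrouter.ai.
MINIMAX_SLUG = "minimax/minimax-m2.5"
OTHER_SLUG = "meta-llama/llama-3.3-70b-instruct"


def chat_payload(model: str, prompt: str) -> dict:
    """Build an OpenAI-style chat completion payload for the given model."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }


def switch_model(payload: dict, new_model: str) -> dict:
    """Return a copy of the payload that targets a different model."""
    return {**payload, "model": new_model}


original = chat_payload(MINIMAX_SLUG, "Summarize today's server logs.")
switched = switch_model(original, OTHER_SLUG)
print(original["model"], "->", switched["model"])
```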

Does MiniMax M2.5 work with all OpenClaw features?

Yes. MiniMax M2.5 works with all OpenClaw capabilities, including shell access, file management, web browsing, and messaging platform integrations. It supports the tool calling that OpenClaw's agent framework requires.
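For readers unfamiliar with tool calling, here is a rough sketch of what it looks like in the OpenAI-style format that OpenRouter accepts. The `run_shell` tool, its schema, and the helper names are hypothetical and are not OpenClaw's actual tool definitions. The model slug is also an assumption.

```python
import json

# Hypothetical tool definition in OpenAI-style function-calling format.
RUN_SHELL_TOOL = {
    "type": "function",
    "function": {
        "name": "run_shell",
        "description": "Run a shell command and return its output.",
        "parameters": {
            "type": "object",
            "properties": {"command": {"type": "string"}},
            "required": ["command"],
        },
    },
}


def build_tool_payload(prompt: str, model: str = "minimax/minimax-m2.5") -> dict:
    """Build a chat payload that offers the model one callable tool."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "tools": [RUN_SHELL_TOOL],
    }


def extract_tool_calls(response: dict) -> list[tuple[str, dict]]:
    """Return (tool name, parsed arguments) pairs from a chat completion response."""
    message = response["choices"][0]["message"]
    calls = message.get("tool_calls") or []
    return [
        (call["function"]["name"], json.loads(call["function"]["arguments"]))
        for call in calls
    ]
```

The agent framework runs the requested tool, sends the result back as a `tool` message, and the model continues from there. OpenClaw manages this loop for you.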

Is my data sent to MiniMax?

When using MiniMax M2.5 via OpenRouter, your messages leave your server: they are routed through OpenRouter to MiniMax (or another provider hosting the model, depending on OpenRouter's routing) for inference. If data privacy is a concern, you can run a local model via Ollama instead. OpenClaw supports both cloud and local models.

Start using MiniMax M2.5 with OpenClaw

Deploy your own AI agent powered by MiniMax M2.5 via OpenRouter.